因果: Reflections on a PhD Journey

Every action sets something in motion. We just rarely get to see where the chain leads until much later.

This post is also available in Chinese (中文版).

A Simulated World

I recently watched Pantheon on Netflix. It’s a show about uploaded intelligence — people who exist somewhere between physical bodies and digital worlds, living out their consciousness in simulated spaces that feel as real as anything made of flesh. The premise is wild, but what stayed with me had nothing to do with the technology. It was something simpler, something the show kept circling back to: when everything around you could be constructed, could be simulated, what is actually real?

I kept thinking about that question long after the credits rolled. And the answer I kept arriving at was always the same: the connections between people. Not the environment you’re in. Not the output you produce. Not the world’s judgment of whether your time was well spent. What’s real — what endures — is the thread that runs between you and another person, and the quiet, unpredictable ways those threads pull on the rest of your life.

That idea maps surprisingly well onto my PhD journey. We spend so much time evaluating our choices by surface-level criteria. Was that internship productive? Did that paper get in? Was that the right research direction? But the real consequences of our decisions — the ones that actually reshape the trajectory of a life — often have nothing to do with those metrics. They hide in people you meet over drinks, in conversations you barely remember having, in friendships that lie dormant for years before suddenly mattering more than anything.

In Chinese, there’s a concept called 因果 — cause and effect. It’s not karma in the pop-culture sense of cosmic scorekeeping. It’s something quieter: the idea that every action plants a seed, and you won’t know what it grows into until much later, sometimes years later, sometimes in ways you never could have imagined.

This essay is my attempt to trace a few of those chains. Not to draw lessons — I’m suspicious of lessons — but just to look back and notice the patterns that only become visible in retrospect.

Seattle: The “Wasted” Summer

Before my PhD, I interned at Amazon in Seattle. By any conventional measure, it was unremarkable. The project didn’t excite me, resources were limited, and I knew early on that I wasn’t going to produce anything of real research significance. So rather than grind through the motions, I let myself drift. I explored the city. I spent long evenings out with people I’d just met. I relaxed in a way I hadn’t allowed myself to in years.

From a career-optimization standpoint, it looked like a wasted summer.

But one of the friends I made during those meandering Seattle evenings would, two years later, introduce me to a girl at a conference dinner. That girl is now my fiancée.

I think about this a lot. If you’d told me at the time that the single most consequential thing I’d do that summer was grab drinks with a colleague, I’d have laughed. The internship felt like a footnote. In reality, it was the opening sentence of a story I didn’t know I was writing. That’s 因果 at work. The value of that time had nothing to do with project deliverables. It lived entirely in the human connections I stumbled into — connections whose meaning wouldn’t surface for years.

The Difficult Year

After my first year of the PhD, I moved to New York for an internship at Meta. That’s when I started working on multimodal research in earnest — speech-language modeling, audiovisual understanding. It was my entry into the area that would define much of my PhD, and the research itself was solid enough to point me toward a real direction.

But what I remember most about that period is not the research.

I was going through a breakup. I was emotionally all over the place, trying to hold myself together while simultaneously trying to build a new research identity in a new city. It’s the kind of thing that never appears on a CV, but it colors everything — how you think, how you work, how you show up to a meeting at 10 a.m. when you barely slept.

It was during this difficult stretch, on a conference trip, that my Amazon-era friend introduced me to the woman who would become my fiancée. We started a China–US long-distance relationship with no guarantees, neither of us fully sure it could survive the distance. But it did. And it would go on to reshape nearly every major decision I made from that point forward.

The Wilderness

After Meta, I returned to Baltimore. I was back in the familiar rhythm of PhD life, extending my work on audiovisual language modeling, but something had shifted. My heart wasn’t in it anymore. The project had drifted away from what I actually cared about, and a deeper disillusionment was settling in — the kind that doesn’t arrive suddenly but accumulates, day by day, like dust.

For the first time, I felt the gap between academic and industry ML research not as an abstract complaint but as a lived reality. The difference in compute, in data, in iteration speed — it was enormous. Working on multimodal research in an academic lab sometimes felt like bringing a knife to a gunfight. I’d read about what industry teams were building and wonder whether anything I did in my small corner could possibly matter.

I’ll be honest about what that period looked like from the inside: I spent maybe half my day on research and the other half learning options trading and doing day trades. Partly because I’d genuinely developed an interest in finance. But mostly because I was bored, restless, and losing my connection to the work. My life in suburban Baltimore was quiet in a way that felt more like emptiness than peace — aside from a few close friends, there wasn’t much to anchor me.

This lasted about six months, from early 2025 into the summer. I suspect many PhD students go through some version of this wilderness — a stretch where the motivation drains out and the whole project starts to feel like an elaborate exercise in futility. People don’t talk about it much. I want to name it here, not because I have advice for getting through it, but because pretending it didn’t happen would make the rest of this story dishonest.

Going Back

In the summer of 2025, I took a position at ByteDance. The reason had nothing to do with career strategy. It was simple, almost embarrassingly so: I wanted to go back to Shanghai to be with my girlfriend.

ByteDance happened to be the company that could offer me a relevant research problem while also putting me where I needed to be personally. So I went. I didn’t know if it was the right move. I only knew what mattered to me, and I decided to trust that.

What happened next was 因果 at its most dramatic.

I arrived just as the field entered one of its most transformative periods. The agent paradigm was accelerating fast. The problems landing on my desk — multimodal reasoning, multimodal generation — were more alive, more relevant, and more exciting than anything I’d worked on before. The world had shifted, and by pure accident of timing and personal choice, I was standing in exactly the right place.

A decision made for love turned out to be the best research decision of my PhD. I couldn’t have planned it. I didn’t plan it. But cause and effect doesn’t care about your plans — it just keeps moving.

A Text Message

A few weeks ago, I got a text from a student I’d mentored a couple of years back. He was an undergraduate, unsure whether to go into software engineering or pursue a PhD. We’d talked a few times — nothing structured, just honest conversations about trade-offs, about what he actually wanted, about the difference between what looks impressive and what feels right.

I’d mostly forgotten about it.

Two years later, he wrote to thank me. He said he’d pushed himself hard, hit milestones he hadn’t been sure he could reach, and now had opportunities he was genuinely excited about. He said those early conversations had been a turning point.

I sat with that message for a while. To me, it had been just a conversation — the kind you have over coffee and don’t think about again. To him, it was a fork in the road.

This is the piece of 因果 I keep coming back to. You can’t know which of your actions will matter. A casual conversation might redirect someone’s entire trajectory. An aimless summer might lead you to the person you’ll marry. A decision made because you missed someone might drop you into the most important work of your career. We scatter seeds without knowing it, and we don’t get to choose which ones take root.

Research as Cause and Effect

My research trajectory, like everything else, was never really planned. It evolved through a series of turns, each one driven as much by circumstance as by intention.

It started with multimodal understanding — teaching language models to reason over audio and visual inputs. How do you take signals from different senses and make sense of them together? That was the foundation, the first question that grabbed me.

From there I moved into planning and tool use. If a model can understand what it sees and hears, what does it do with that understanding? How does it decide to act, call a tool, interact with the world? The questions shifted from perception to agency.

Then came generation. Starting in late 2025, I became interested in the other direction — not understanding media but producing it. How do you use reinforcement learning to make generated content better, more controllable, more aligned with what people actually want?

And now all of it is converging into voice agents.

At the time, each transition felt abrupt, even a little disorienting. I’d be deep in one area and suddenly find myself pulled toward something adjacent. But looking back, each phase was quietly building on the last in ways I couldn’t see while living through them. Simultaneous translation taught me about trigger points — the problem of deciding when enough information has accumulated to justify action. Multimodal understanding taught me how to fuse signals across modalities. Agent research taught me about tools and real-world interaction.

All of it feeds into how I think about voice agents now. The path only makes sense in reverse.

Where Voice Agents Need to Go

Here I want to shift from looking backward to looking forward. I don’t have all the answers — I’m standing at another inflection point, and I can’t see the consequences clearly yet. But I do have a strong sense of what’s missing.

The infrastructure is catching up fast. The building blocks for capable voice agents — memory systems, evaluation frameworks, tool connectivity, real-world interfaces — are improving at a pace that would have seemed implausible a year ago. I don’t think infrastructure will be the bottleneck for much longer.

What will be the bottleneck is something subtler.

Today, voice systems are split into two separate worlds. On one side, you have the conversational pipeline — speech in, expressive speech out, optimized for low latency and natural-sounding interaction. On the other, you have the functional pipeline — detect intent, call APIs, retrieve results, execute tasks. These two paradigms are built separately, evaluated separately, and thought about separately.

But that separation doesn’t reflect how humans actually communicate. Real conversation doesn’t split neatly into “chatting” and “commanding.” It flows between the two, and much of it lives in the ambiguous middle — where intent is only half-formed, where you’re thinking out loud but also sort of hoping someone will pick up on what you need.

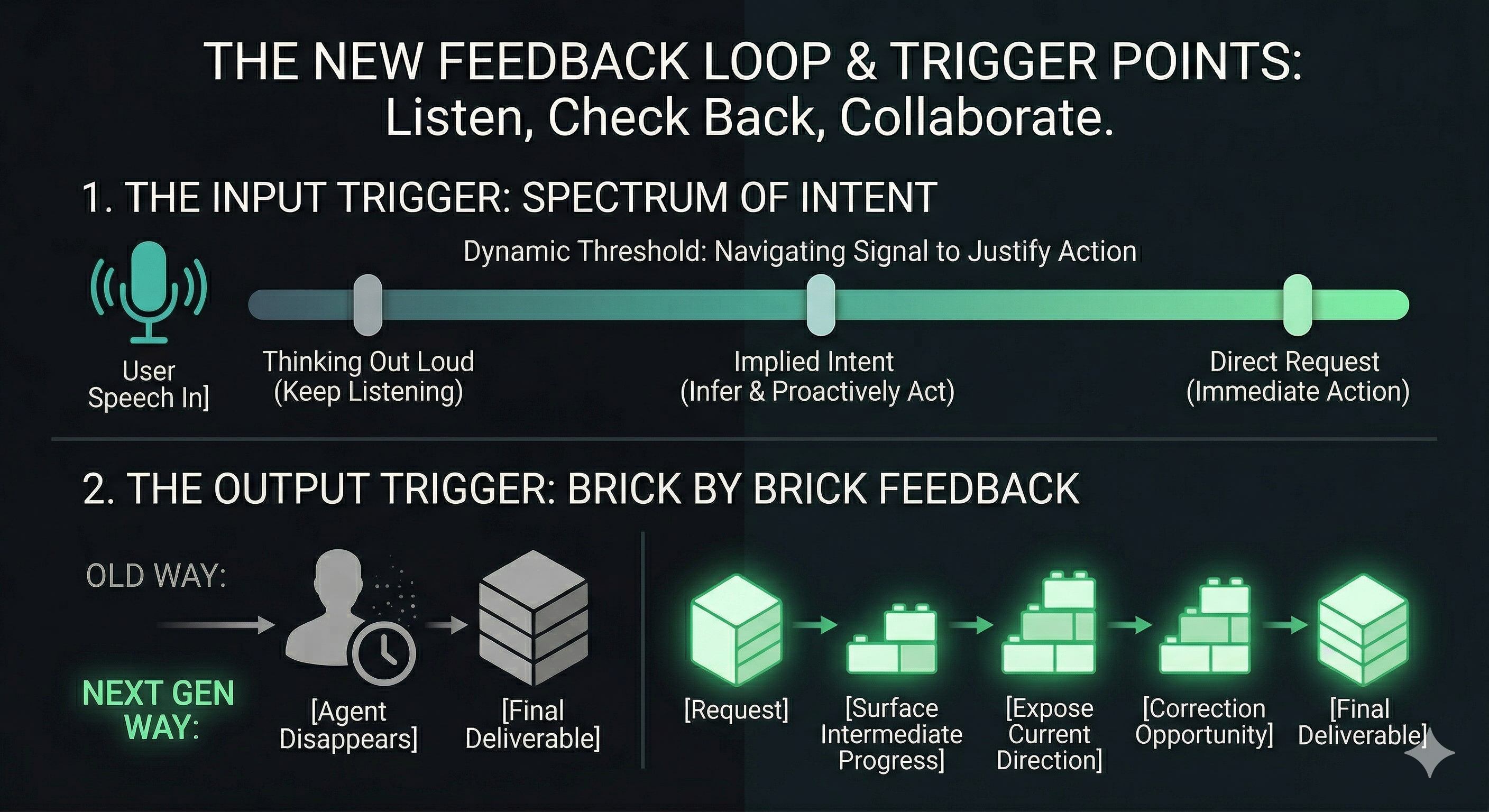

The real challenge is designing the feedback loop. This is what I think will define the next generation of voice agents, and it comes down to two trigger-point problems.

The first is the input trigger: when has the user said enough to justify the agent taking action? I know this problem intimately from simultaneous translation, where the system must constantly decide whether enough words have accumulated to start generating a translation. But for voice agents, the problem is much harder, because you’re not just translating — you’re trying to infer intent along a continuous spectrum. Sometimes the user is just thinking out loud. Sometimes they’re giving a direct command. And often they’re somewhere in between, talking loosely but hoping the agent will figure out what they need and step in at the right moment. A good voice agent has to read all of this in real time.

The second is the output trigger: how does the agent communicate while it works? Current agents tend to go dark during processing — you give them a task, they disappear, and eventually they return with a finished result. Even when agents offer plan-mode clarification questions, they’re still far from the interactiveness we’d expect from a real human collaborator. This pattern is fundamentally wrong. Good collaboration doesn’t work that way. A good collaborator checks in, shows drafts, surfaces progress, creates openings for course correction. Voice agents should work the same way — delivering feedback brick by brick, not vanishing until the job is done.

The harness, not the model, is the product. Models will keep getting better. Tools and APIs will keep multiplying. But what will actually separate a great voice agent from a mediocre one is the harness — the system-level architecture that governs when to listen, when to act, when to interrupt, when to check back, and how to manage the concurrent streams of input, processing, and response. That’s where the real product differentiation lives.

Startups see this more clearly than big labs. This has been one of the most striking things I’ve noticed in conversations with people across the industry. Startups — building for real users in specific domains like gaming, therapy, or enterprise workflows — tend to have a much clearer picture of what actually needs to exist. Not because they have better researchers, but because they’re close enough to users to see where the interaction breaks down. Big labs, including ones I’ve worked at, tend to focus on the model itself: pushing benchmarks, expanding capabilities, publishing results. But we’re often too far from the messy reality of how people actually want to use these systems. The feedback-loop problem isn’t something you find in a paper. You find it by watching someone try to use a voice agent for a real task and noticing exactly where they get frustrated.

This is the mindset shift I’ve been going through lately: from how do I make the model better? to how do I make the interaction right? The two questions sound similar. In practice, they lead to very different research and very different products.

No Conclusions

I started this essay thinking I might arrive at something tidy — a lesson, a framework, a piece of advice. I didn’t.

You can’t optimize for 因果. You can’t engineer the chain of cause and effect to produce the outcomes you want. You can only stay open to connection, trust that the chain keeps extending beyond what you can see, and try to remain honest with yourself about what you actually care about — not what looks good, not what maximizes some short-term metric, but what matters for your well-being and the kind of life you want to live.